Feature flags & experiments

Monitor errors as you roll out features or run experiments and A/B tests.

If you practice progressive delivery by using feature flags and experiments (including A/B tests) to manage rollout of new features, you can use BugSnag to monitor the impact of the rollouts on your app’s stability.

By declaring active feature flags and experiments in your notifier, you can:

- monitor errors that may have been introduced by new features or experiment variants on the features dashboard.

- filter the inbox and timeline to identify which features or experiment variants may be linked to an increase in errors.

- fix errors faster by understanding which features and experiment variants were active at the time of an error.

What are feature flags and experiments?

- Feature flags are used to control access to a new feature, initially to a small group of users and eventually to all users if effectiveness and stability goals are met.

- Experiments are an extension of feature flags where one or more variants are compared to a control group. When the experiment is complete, the most effective variant that meets stability goals will be rolled out to all users.

Feature flags and experiments can be implemented in-house or, for more control, through a third-party provider such as Split.io or LaunchDarkly. The platform guides include instructions for integrating with these providers.

Declaring feature flag and experiment usage

Feature flag and experiment usage is declared through your BugSnag notifier. See the documentation for your platform for details:

- Android

- Electron

- iOS

- JavaScript ( browser | Node.js )

- PHP ( Laravel | Lumen | Symfony | Other )

- Python ( ASGI | Bottle | Celery | Django | Flask | Tornado | WSGI | Other )

- React Native ( Expo | React Native )

- Ruby ( Que | Rack | Rails | Rake | Sidekiq | Sinatra | Other )

- Unity

- Unreal Engine

For updates on when support will be available for other platforms, please get in touch with us.

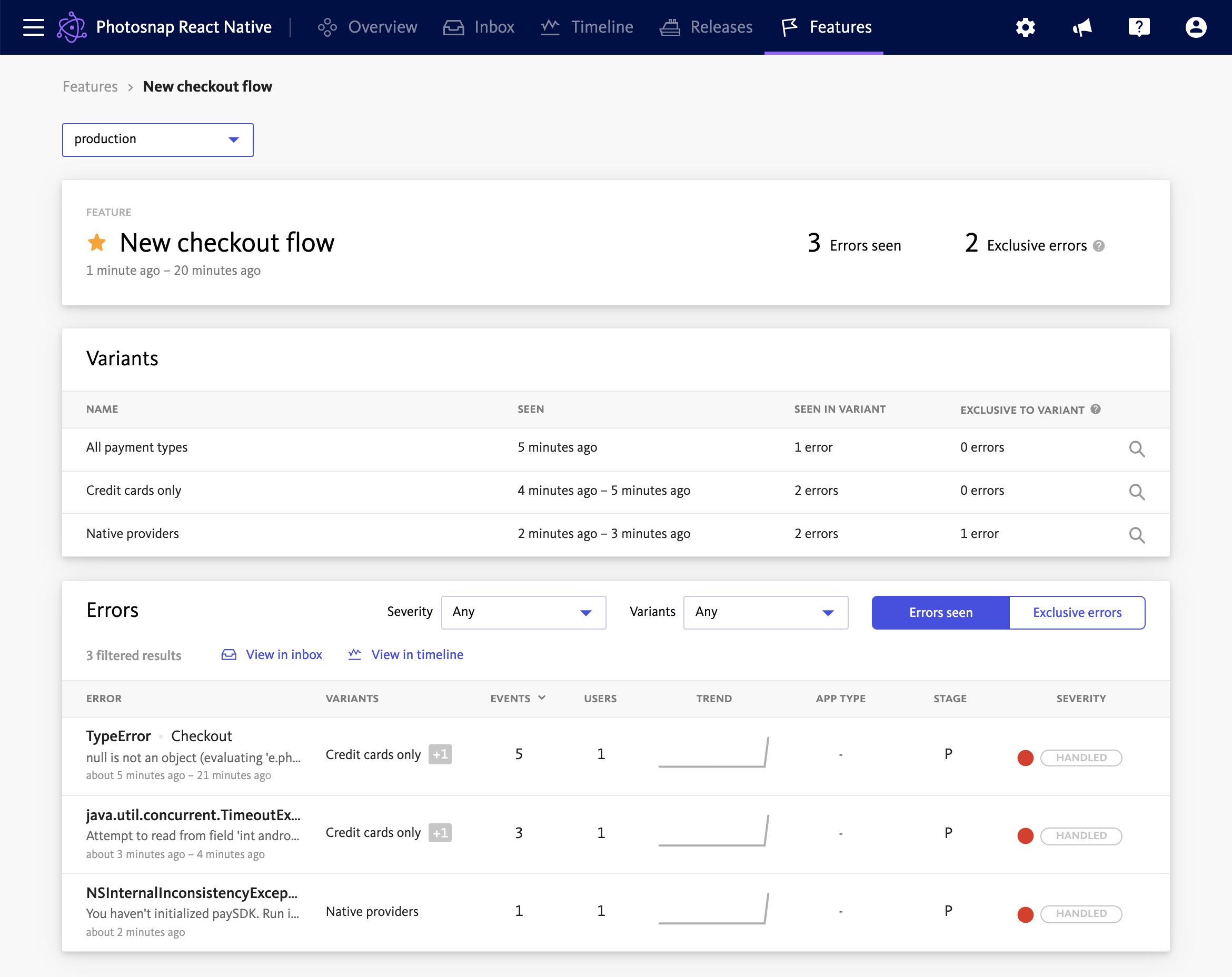

Features dashboard

The features dashboard is available on Enterprise plans.

The features dashboard is used to monitor errors impacting feature flags and experiments that you are running in your app.

Seen and exclusive errors

The feature details page shows errors that occurred when a feature flag or experiment was active:

- Seen errors are all errors that occurred while a feature flag or experiment was active.

- Exclusive errors are errors that only occurred while a feature flag or experiment was active, and did not occur when the feature flag or experiment was not active. Exclusive errors should be investigated to determine if they have been introduced by the feature flag or experiment code.

Errors can also be exclusive to a variant, meaning they have only impacted members of a single variant. An error that is exclusive to a variant is by definition exclusive to the feature flag or experiment overall. The reverse does not hold: an error that is exclusive to a feature flag or experiment overall may have occurred in several or all of its variants.
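The distinction between seen, exclusive, and variant-exclusive errors can be illustrated with a small sketch. This is not how BugSnag computes these sets internally; the event shape (an error id paired with a dictionary of active flags) and the function name `classify_errors` are assumptions made for illustration only.

```python
from collections import defaultdict


def classify_errors(events, flag):
    """Classify error ids for one flag.

    `events` is a hypothetical list of (error_id, {flag_name: variant}) pairs.
    Returns (seen, exclusive, exclusive_by_variant).
    """
    seen = set()                        # errors that occurred with the flag active
    without_flag = set()                # errors that occurred with the flag inactive
    variants_by_error = defaultdict(set)

    for error_id, active_flags in events:
        if flag in active_flags:
            seen.add(error_id)
            variants_by_error[error_id].add(active_flags[flag])
        else:
            without_flag.add(error_id)

    # Exclusive: seen with the flag active and never seen without it.
    exclusive = seen - without_flag

    # Exclusive to a variant: exclusive errors that hit exactly one variant.
    exclusive_by_variant = defaultdict(set)
    for error_id in exclusive:
        variants = variants_by_error[error_id]
        if len(variants) == 1:
            (variant,) = variants
            exclusive_by_variant[variant].add(error_id)

    return seen, exclusive, dict(exclusive_by_variant)


events = [
    ("E1", {"new-checkout": "blue"}),
    ("E1", {}),                          # E1 also occurs without the flag
    ("E2", {"new-checkout": "blue"}),
    ("E2", {"new-checkout": "green"}),   # E2 occurs in two variants
    ("E3", {"new-checkout": "green"}),   # E3 occurs only in "green"
]
seen, exclusive, by_variant = classify_errors(events, "new-checkout")
```

In this example all three errors are seen, E2 and E3 are exclusive to the flag, and only E3 is exclusive to a variant.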

To get value from Exclusive errors, only record feature flag or experiment usage when it has an impact on functionality. In other words, do not record “off” or “control” variants. This allows Exclusive errors to highlight the feature flags or experiments that have actually caused the error.
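One way to follow this guidance is to filter flag evaluations before declaring them to the notifier. The sketch below is a minimal illustration, assuming evaluations arrive as a dictionary of flag name to variant; the helper `flags_to_declare` and the set of inactive variant names are hypothetical and should be adapted to whatever your flag provider returns.

```python
# Variant names treated as having no functional impact.
# This naming convention is an assumption; adjust it to your provider.
INACTIVE_VARIANTS = {"off", "control", "false", "disabled"}


def flags_to_declare(evaluations):
    """Return only the flags whose variant changes behaviour.

    `evaluations` maps flag name -> variant (a string, a bool, or None).
    The result maps flag name -> variant name, or None for a plain on/off flag.
    """
    declared = {}
    for name, variant in evaluations.items():
        if variant is False or variant is None:
            continue  # flag is off: do not record it
        if isinstance(variant, str) and variant.lower() in INACTIVE_VARIANTS:
            continue  # "off"/"control" style variant: do not record it
        # A boolean True flag is active but has no variant name.
        declared[name] = None if variant is True else variant
    return declared


evaluations = {
    "new-checkout": "blue",
    "dark-mode": "off",
    "fast-search": True,
    "pricing-test": "control",
}
to_declare = flags_to_declare(evaluations)
```

Here only `new-checkout` (variant `blue`) and `fast-search` (no variant) would be passed on to the notifier's feature flag declaration call for your platform.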

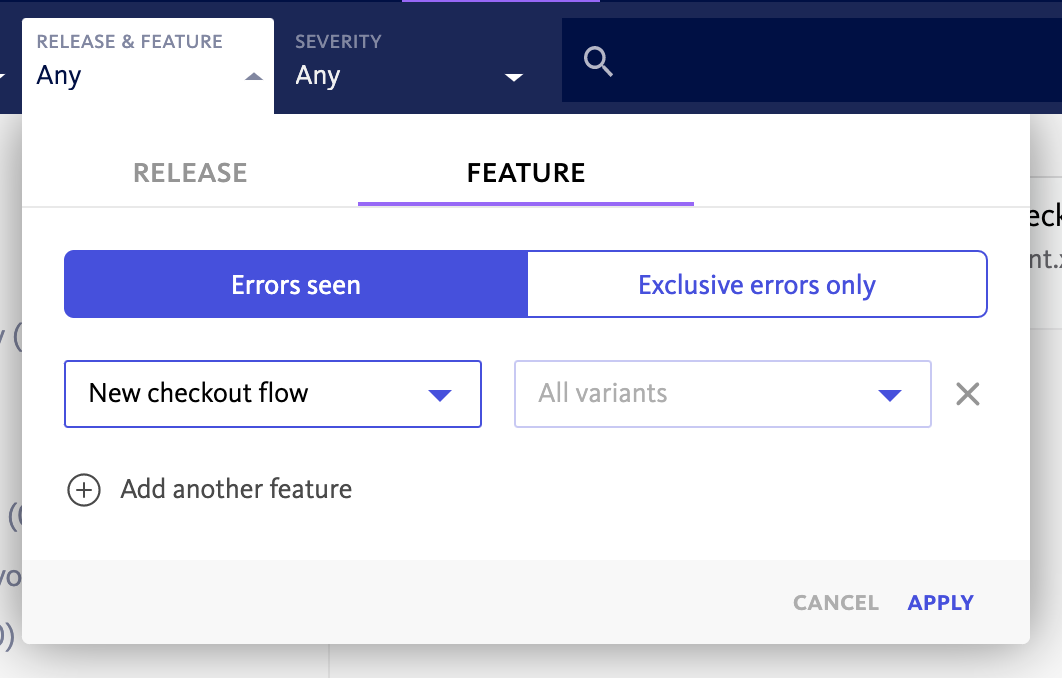

Filtering and notifications

Feature and experiment filtering is available on Enterprise plans.

You can search for errors that occurred while a feature flag or experiment variant was active using the “Releases and Features” filter from the search bar.

By creating a saved filterset with your current filters, you can then create notifications and issue tracker automations for errors occurring while a feature flag or experiment variant is active.

Maximum features and experiments

By default, each event can include up to 100 active features and experiments. There is no limit on the number of different variant values that can be used.

If the active features and experiments limit is exceeded for an event:

- all features and experiments will continue to be shown on the error details page.

- only the first features and experiments, up to the limit, will be available on the Features dashboard and filterable on the Inbox and Timeline.

- conversely, an event will not be included in search results or pivot tables when filtering by a feature or experiment that falls beyond the limit for that event.
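The effect of the limit on a single event can be sketched as follows. This is an illustrative model only: it assumes that "first" means the order in which flags appear on the event, and the function names are hypothetical rather than part of any BugSnag API.

```python
MAX_ACTIVE_FLAGS = 100  # default per-event limit described above


def split_by_limit(active_flags, limit=MAX_ACTIVE_FLAGS):
    """Split an event's flags into those that are indexed and those only displayed.

    Assumes `active_flags` is ordered, and that ordering decides which are "first".
    """
    indexed = active_flags[:limit]        # dashboard, Inbox and Timeline filters
    display_only = active_flags[limit:]   # still shown on the error details page
    return indexed, display_only


def event_matches_filter(active_flags, flag_name, limit=MAX_ACTIVE_FLAGS):
    """Would this event appear when filtering by `flag_name`?"""
    indexed, _ = split_by_limit(active_flags, limit)
    return flag_name in indexed


# An event with 103 active flags: flag-1 ... flag-103.
flags = [f"flag-{i}" for i in range(1, 104)]
indexed, display_only = split_by_limit(flags)
```

Under these assumptions, filtering by `flag-100` would return this event, while filtering by `flag-101` would not, even though `flag-101` is still listed on the error details page.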

To increase the number of active features and experiments available to your organization, please get in touch with us.